Overview of RLinf-USER

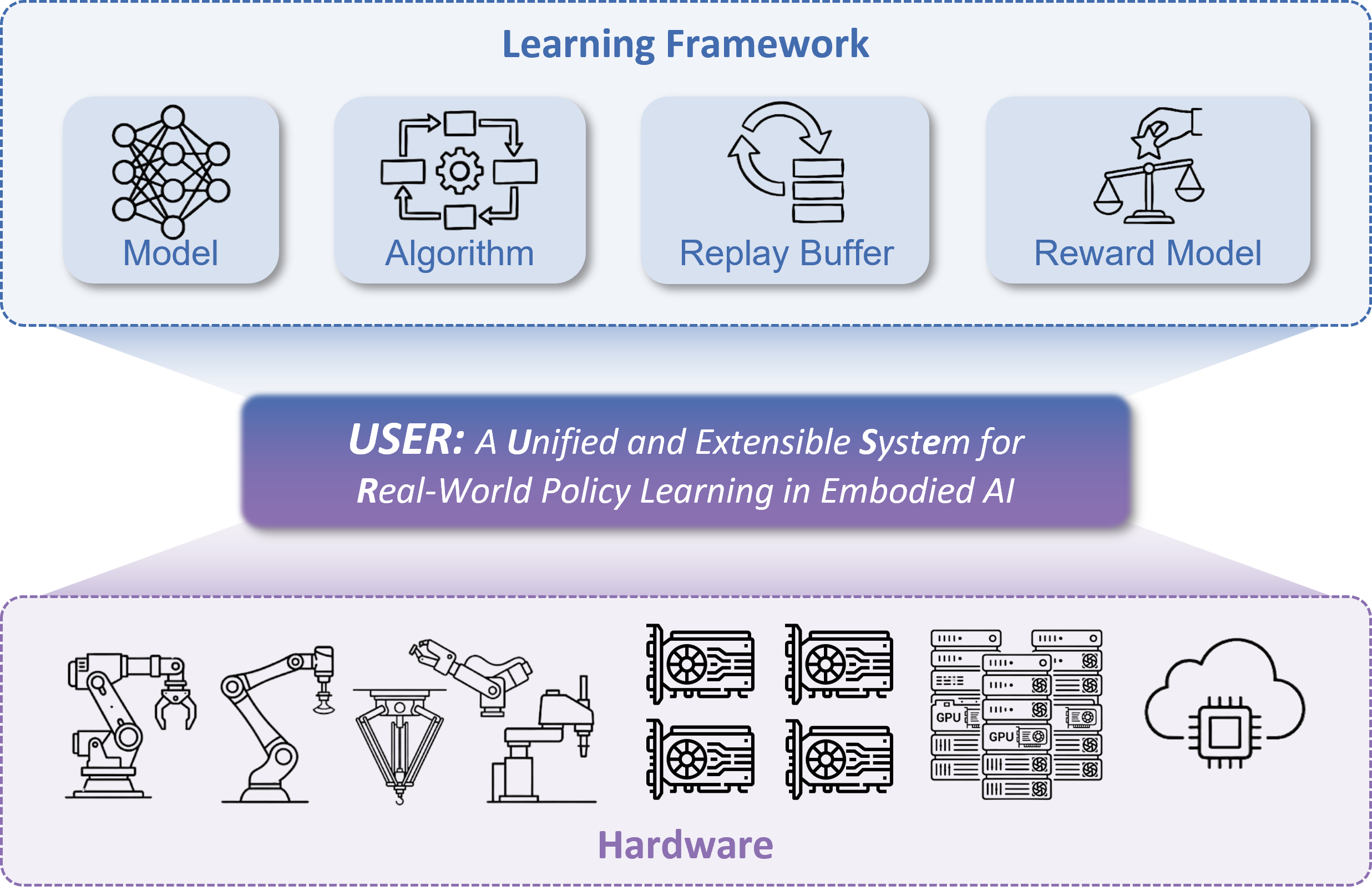

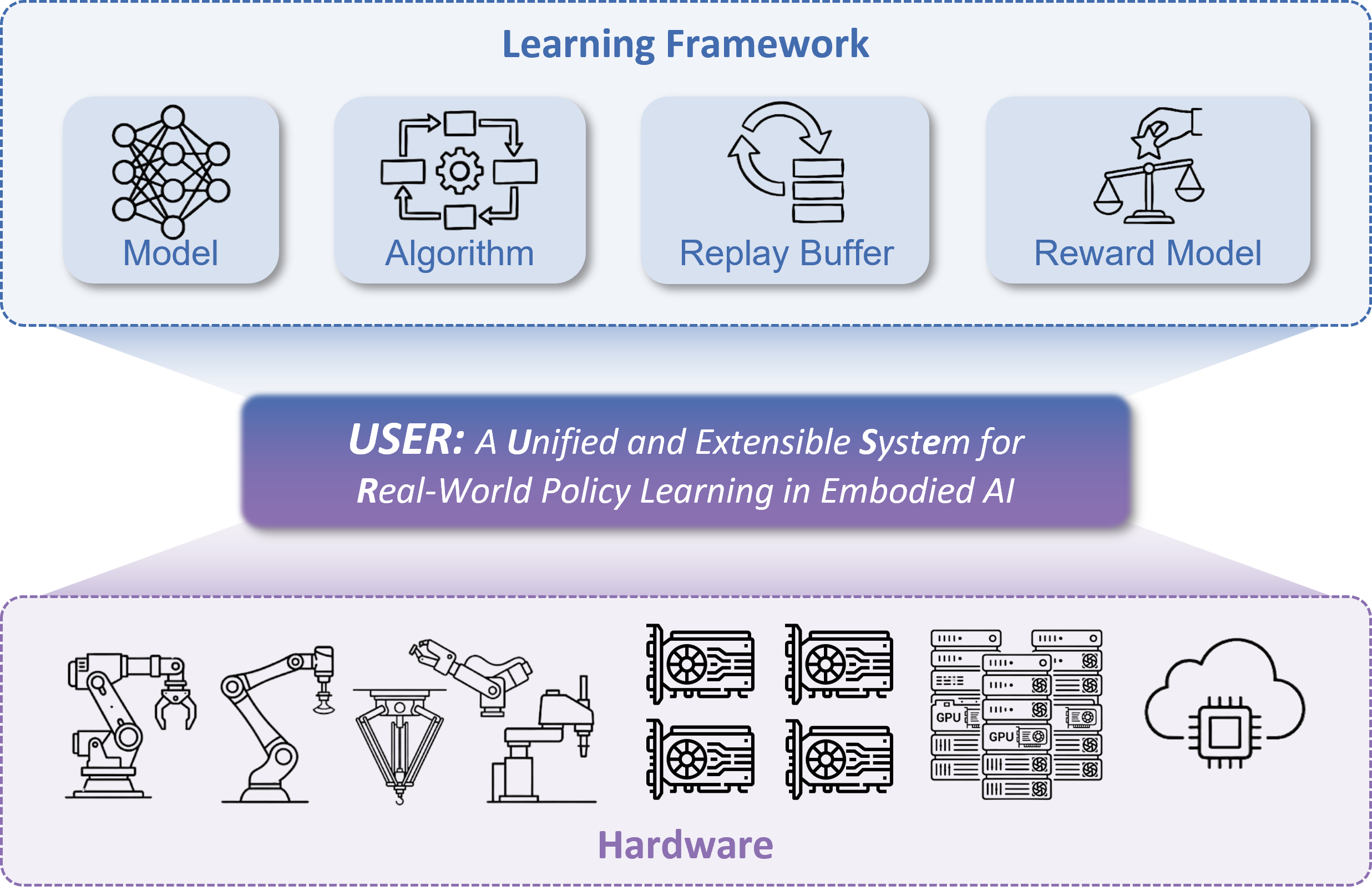

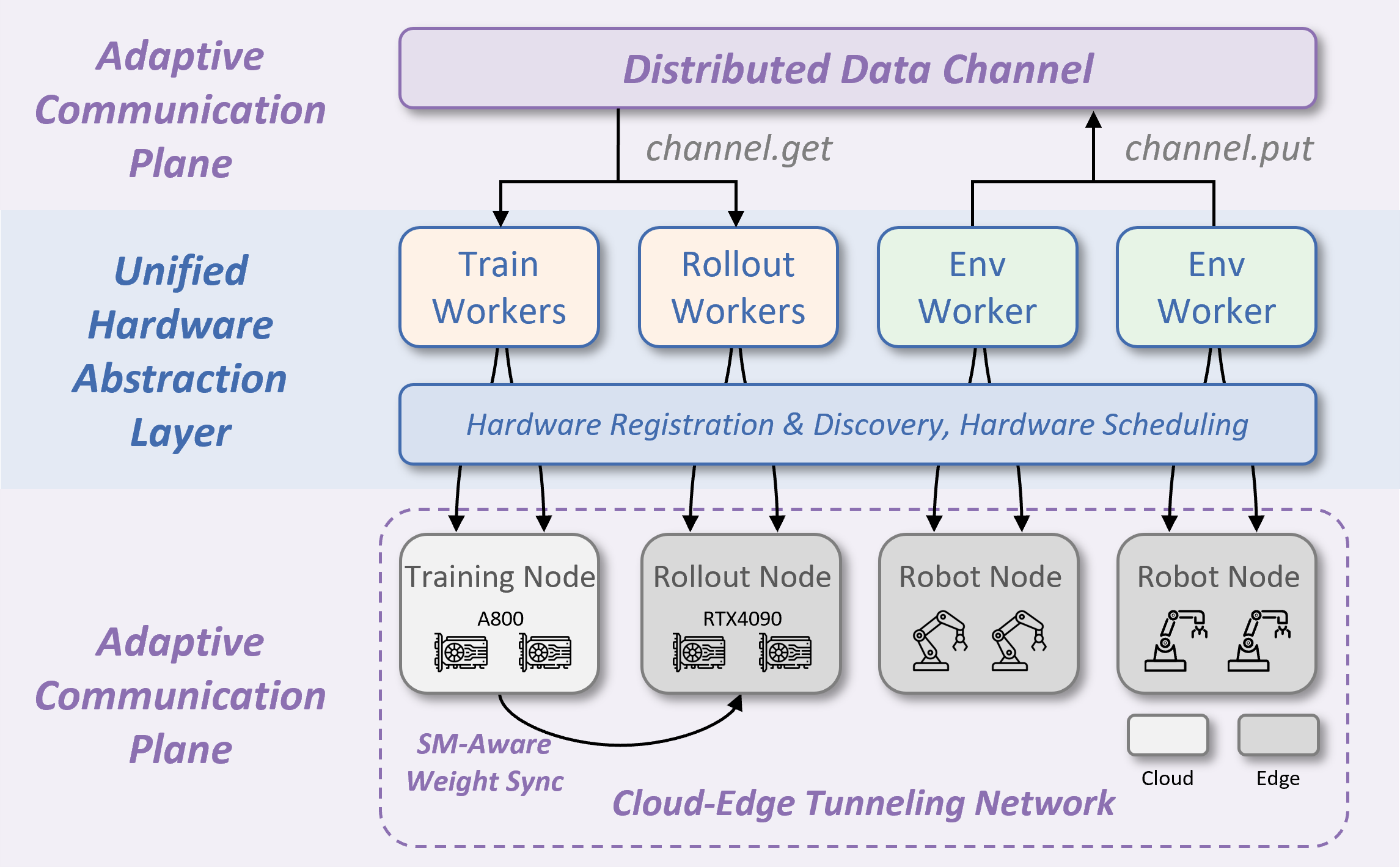

Online policy learning directly in the physical world is a promising yet challenging direction for embodied intelligence. Unlike simulation, real-world systems cannot be arbitrarily accelerated, cheaply reset, or massively replicated, which makes scalable data collection, heterogeneous deployment, and effective long-horizon training difficult. These challenges suggest that real-world policy learning is not only an algorithmic issue but fundamentally a systems problem. We present USER, a Unified and extensible SystEm for Real-world online policy learning. USER treats physical robots as first-class hardware resources alongside GPUs through a unified hardware abstraction layer, enabling automatic discovery, management, and scheduling of heterogeneous robots. To address cloud–edge communication, USER introduces an adaptive communication plane with tunneling-based networking, distributed data channels that localize traffic, and streaming-multiprocessor-aware weight synchronization that regulates GPU-side overhead. On top of this infrastructure, USER organizes learning as a fully asynchronous framework with a persistent, cache-aware buffer, enabling efficient long-horizon experiments with robust crash recovery and reuse of historical data. In addition, USER provides extensible abstractions for rewards, algorithms, and policies, supporting online imitation or reinforcement learning of CNN/MLP policies, generative policies, and large vision–language–action (VLA) models within a unified pipeline. Results in both simulation and the real world show that USER enables multi-robot coordination, heterogeneous manipulators, edge–cloud collaboration with large models, and long-running asynchronous training, offering a unified and extensible systems foundation for real-world online policy learning. Our code is available here.
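
The idea of treating robots as first-class resources alongside GPUs can be illustrated with a small registry that hands out both kinds of hardware through one interface. This is a minimal sketch under our own assumptions, not USER's actual API: the names `Resource`, `ResourcePool`, `allocate`, and `release`, the node labels, and the all-or-nothing allocation policy are hypothetical, and "discovery" is reduced to explicit registration.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Resource:
    """A schedulable hardware unit; robots and GPUs share one type (hypothetical)."""
    name: str
    kind: str          # e.g. "gpu" or "robot"
    node: str          # host the resource is attached to
    busy: bool = False


@dataclass
class ResourcePool:
    """Hypothetical registry that tracks and hands out heterogeneous resources."""
    resources: list[Resource] = field(default_factory=list)

    def register(self, resource: Resource) -> None:
        # Stand-in for automatic discovery: a real system would probe each node.
        self.resources.append(resource)

    def allocate(self, kind: str, count: int) -> list[Resource]:
        # Pick idle resources of the requested kind, all-or-nothing.
        free = [r for r in self.resources if r.kind == kind and not r.busy]
        if len(free) < count:
            raise RuntimeError(f"need {count} idle {kind}(s), only {len(free)} available")
        chosen = free[:count]
        for r in chosen:
            r.busy = True
        return chosen

    def release(self, resources: list[Resource]) -> None:
        # Return resources to the idle set so later jobs can use them.
        for r in resources:
            r.busy = False


pool = ResourcePool()
pool.register(Resource("gpu0", "gpu", node="cloud-0"))
pool.register(Resource("gpu1", "gpu", node="cloud-0"))
pool.register(Resource("arm0", "robot", node="edge-0"))

actors = pool.allocate("robot", 1)   # environment workers run on robots
learners = pool.allocate("gpu", 2)   # policy training runs on GPUs
print([r.name for r in actors], [r.name for r in learners])
```

Because a robot and a GPU are requested through the same `allocate` call, a scheduler written against this interface does not need a separate code path for physical hardware; that uniformity is the point the abstraction is meant to convey.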

USER provides a fully asynchronous real-world learning pipeline with a persistent, cache-aware buffer and extensible abstractions for policies, algorithms, and reward models.
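
The interplay between asynchronous collection and a persistent, cache-aware buffer can be sketched in a few dozen lines of Python. This is a minimal illustration under stated assumptions, not USER's implementation: `PersistentCachedBuffer`, the one-pickle-file-per-trajectory layout, the LRU cache policy, and the thread-based actor/learner split are all hypothetical choices made for the example. Each trajectory is written atomically to disk so that a restarted process can rebuild its index and reuse historical data, while a bounded in-memory cache avoids rereading recently sampled trajectories.

```python
import os
import pickle
import random
import tempfile
import threading
import time
from collections import OrderedDict


class PersistentCachedBuffer:
    """Hypothetical buffer: every trajectory goes to disk, and a bounded
    LRU cache keeps recently sampled ones in memory."""

    def __init__(self, root, cache_size=4):
        self.root = root
        self.cache_size = cache_size
        self.cache = OrderedDict()
        self.lock = threading.Lock()
        os.makedirs(root, exist_ok=True)
        # Crash recovery: rebuild the index from files a previous run left behind.
        self.ids = sorted(int(f[:-4]) for f in os.listdir(root) if f.endswith(".pkl"))

    def _path(self, idx):
        return os.path.join(self.root, f"{idx}.pkl")

    def add(self, trajectory):
        with self.lock:
            idx = self.ids[-1] + 1 if self.ids else 0
            tmp = self._path(idx) + ".tmp"
            with open(tmp, "wb") as f:
                pickle.dump(trajectory, f)
            os.replace(tmp, self._path(idx))  # atomic rename: no half-written entries
            self.ids.append(idx)
            return idx

    def get(self, idx):
        with self.lock:
            if idx in self.cache:
                self.cache.move_to_end(idx)  # cache hit: mark as most recently used
                return self.cache[idx]
            with open(self._path(idx), "rb") as f:  # cache miss: read from disk
                traj = pickle.load(f)
            self.cache[idx] = traj
            if len(self.cache) > self.cache_size:
                self.cache.popitem(last=False)  # evict least recently used entry
            return traj

    def sample(self, k):
        with self.lock:
            chosen = random.sample(self.ids, min(k, len(self.ids)))
        return [self.get(i) for i in chosen]


def actor(buffer, n):
    # Stands in for a robot worker: collects episodes without waiting for the learner.
    for ep in range(n):
        buffer.add({"episode": ep, "reward": float(ep)})


root = tempfile.mkdtemp()
buf = PersistentCachedBuffer(root, cache_size=4)
collector = threading.Thread(target=actor, args=(buf, 10))
collector.start()

updates = 0
while collector.is_alive() or updates < 5:
    batch = buf.sample(2)  # learner uses whatever data exists so far
    if batch:
        updates += 1       # a gradient step on `batch` would go here
    time.sleep(0.001)
collector.join()

# Simulated restart: a fresh buffer object recovers all episodes from disk.
restored = PersistentCachedBuffer(root)
print(len(restored.ids))  # → 10
```

Because the collector never waits for the learner (and vice versa), slow physical episodes do not stall training. Writing to a temporary file and then calling `os.replace` means a crash mid-write leaves at most a stray `.tmp` file, which the recovery scan ignores, rather than a corrupt entry.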

@article{zang2026rlinf,
  title={RLinf-USER: A Unified and Extensible System for Real-World Online Policy Learning in Embodied AI},
  author={Zang, Hongzhi and Yu, Shu'ang and Lin, Hao and Zhou, Tianxing and Huang, Zefang and Guo, Zhen and Xu, Xin and Zhou, Jiakai and Sheng, Yuze and Zhang, Shizhe and others},
  journal={arXiv preprint arXiv:2602.07837},
  year={2026}
}